Hasan Nazim Genc

About Me

I'm a PhD candidate at the University of California, Berkeley, where I'm advised by Krste Asanović (and also collaborate very closely with Sophia Shao). I co-design hardware and software for dense and sparse DNNs (and other types of workloads), and release tools to help other people do that more easily.

My research interests include the design of hardware accelerators for DNN workloads, as well as the design of programming interfaces for such accelerators. I am also interested in agile hardware design methodologies.

You can find my resume here, or a full list of my publications here.

Work Experience

- NVIDIA, Research Intern, 2022

- Anyscale, Software Engineering Intern, 2021

- Amazon Web Services, SDE Intern, 2020

- Intel, Software Engineer Intern, 2017

- CAPSHER Technology, Software Developer Intern, 2016

Education

- University of California, Berkeley, PhD (in progress)

- University of Texas at Austin, BS, 2018

Teaching

- CS 152: Computer Architecture, Spring 2022

- EE 290: Hardware for Machine Learning, Spring 2020

Research Interests

Designing Accelerators

I am extremely interested in frameworks and DSLs that make it easier to design, explore, and evaluate domain-specific accelerators. As part of the Gemmini project, I explored how architects and researchers can investigate the full impact of SoC resource contention, programming-stack inefficiencies, context switches, virtual address translation, and other "full-system" interactions on the performance, efficiency, and correctness of DNN workloads running on hardware accelerators.

Recently, I have also been building a platform that enables architects to rapidly specify and generate accelerators for sparse workloads. This work will soon be open-sourced, along with a paper discussing our findings.

Selected Publications: DAC 2021 (Best Paper Award), ESSCIRC 2021

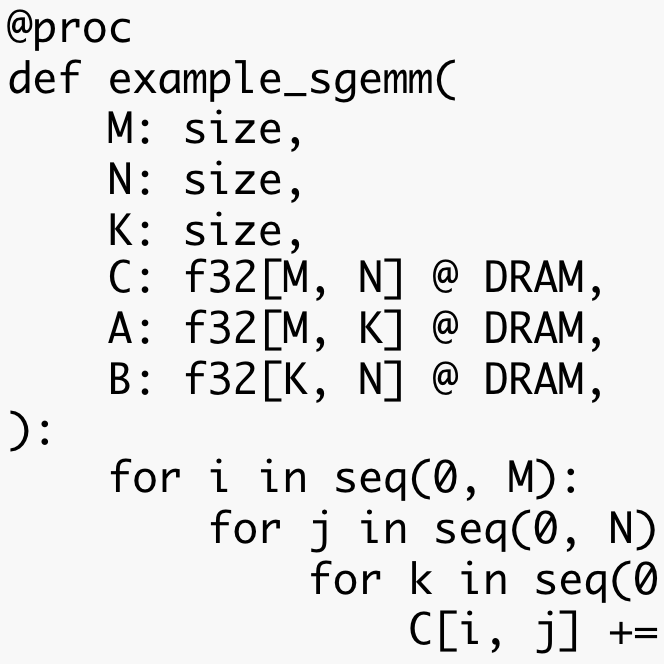

Scheduling

Making hardware is only a small part of the story; writing software that runs efficiently on it can be much harder! I've been involved in various projects that attempt to map software workloads efficiently onto specialized hardware accelerators, whether through automated techniques or through more expressive programming languages.

Selected Publications: HPCA 2023, PLDI 2022, arXiv 2020

Transformers

Transformers' high memory bandwidth requirements, diverse activation functions, and (in some cases) poor scalability can make them challenging to run efficiently on modern hardware. I've helped investigate the "full-stack optimization" of transformer workloads, from model configuration down to hardware design and software mapping. I've also gotten my hands dirty implementing my own hardware-friendly sparsity formats for transformer workloads.

Selected Publications: DAC 2023, arXiv 2023

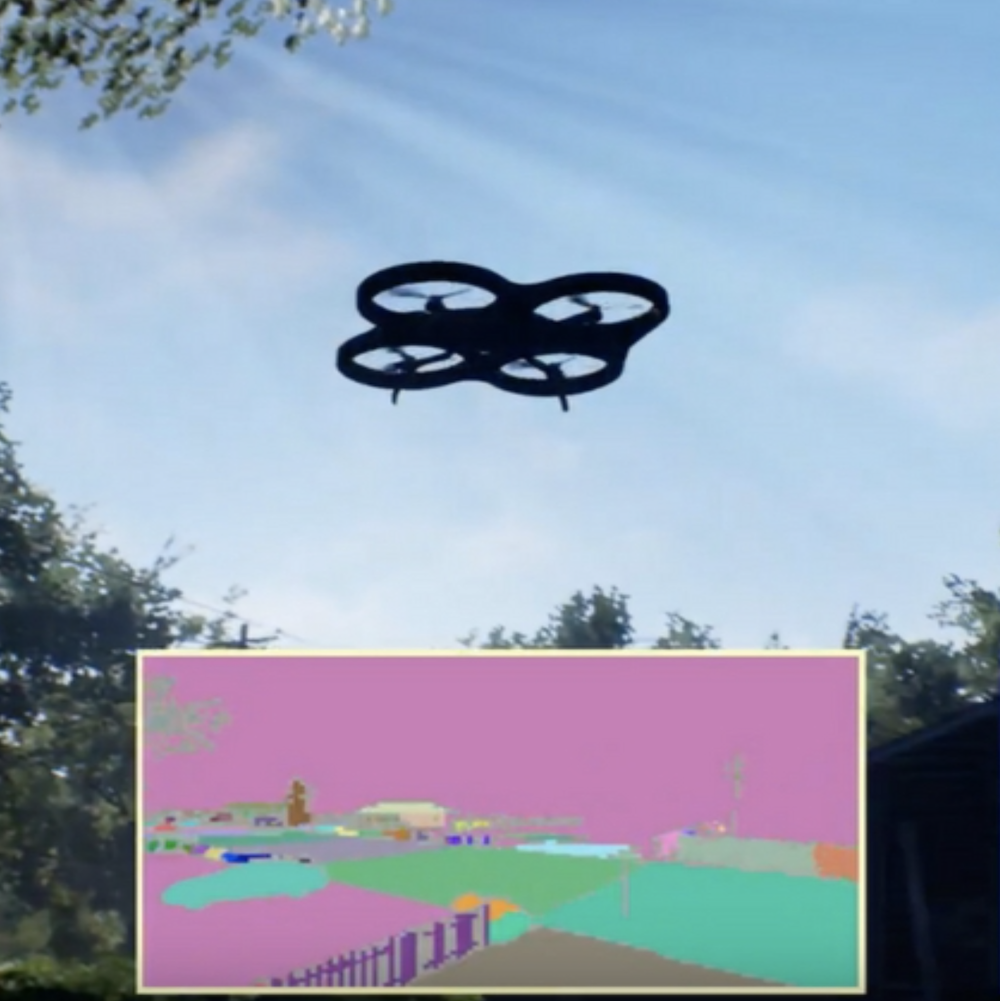

Drones

Back when I was an undergrad, I worked with Vijay Janapa Reddi to investigate the compute requirements of autonomous robots (and flying drones in particular). Robots have a lot of interesting compute trade-offs that may be counter-intuitive to someone who has only worked on "stationary" computers like laptops or servers in the cloud. We created a benchmarking platform, MAVBench, to help computer architects make sense of those trade-offs.

Selected Publications: TOCS 2022, MICRO 2018, IEEE Micro 2018, IEEE Micro 2017

Quotes

Omar Khayyam, in The Rubaiyat:

XXVII

Myself when young did eagerly frequent

Doctor and Saint, and heard great argument

About it and about: but evermore

Came out by the same door where in I went.

XXVIII

With them the seed of Wisdom did I sow,

And with mine own hand wrought to make it grow;

And this was all the Harvest that I reap'd--

"I came like Water, and like Wind I go."

...

XXXII

There was the Door to which I found no Key;

There was the Veil through which I might not see:

Some little talk awhile of Me and Thee

There was--and then no more of Thee and Me.

Yunus Emre, in a poem about an unfortunate tree:

Dağdan kestiler hezenim

Bozuldu türlü düzenim

Ben bir usanmaz ozanım

Derdim var inilerim

Dülgerler her yanım yoldu

Her azam yerine kondu

Bu iniltim Haktan geldi

Derdim vardır inilerim

Francis Bacon, in his Apophthegms:

Solon when he wept for his son's death, and one said to him, “Weeping will not help;” answered, “Alas, therefore I weep, because weeping will not help.”

Peter W. Schramm:

“But where are we going?” I asked.

“We are going to America,” my father said.

“Why America?” I prodded.

“Because, son. We were born Americans, but in the wrong place,” he replied.